Overview

Our research focuses on the design and analysis of quantum algorithms, with an emphasis on near-term applicability and connections to the natural sciences. We develop methods for device-independent certification, explore the structure of Bell correlations in many-body systems, and work on the theory and implementation of tensor networks. We also investigate distributed quantum computing, quantum machine learning, scalable certification of many-body properties, quantum metrology, and entanglement theory, alongside broader contributions to the quantum information community.

Our vision is to make real-world quantum computing practical by integrating theory, experiment, and applications. Our research is embedded in the Applied Quantum Algorithms – Leiden initiative, a collaborative effort to advance quantum technologies through interdisciplinary approaches.

Quantum algorithms and applications

Ground-state preparation. We proposed a general-purpose quantum algorithm to prepare ground states from a trial state, building on recent advances in solving the quantum linear systems problem. Compared to phase estimation, our method achieves an exponential improvement in the dependence on precision and a reduced ancilla overhead, making it especially appealing for early quantum devices1 .

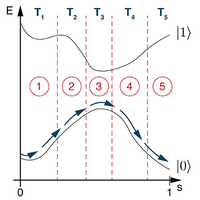

Adiabatic spectroscopy and variational methods. We introduced a hybrid algorithm combining adiabatic evolution with variational optimization. The method yields spectral information and reduces total evolution time, making it suitable for systems with limited coherence2 . This approach has found use in recent experiments with Rydberg atoms. A related strategy based on echo verification was later proposed to mitigate coherent non-adiabatic transitions3 .

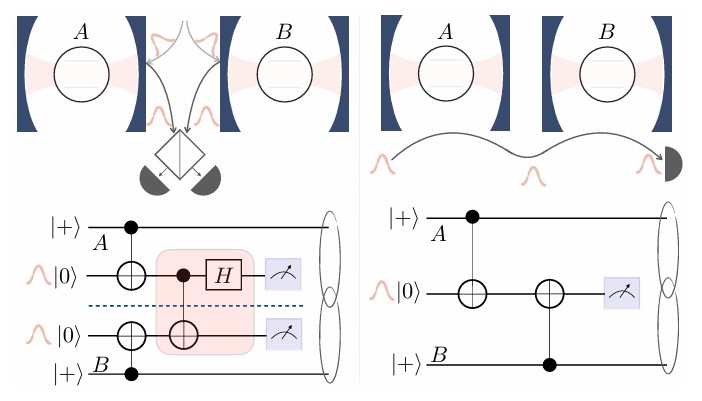

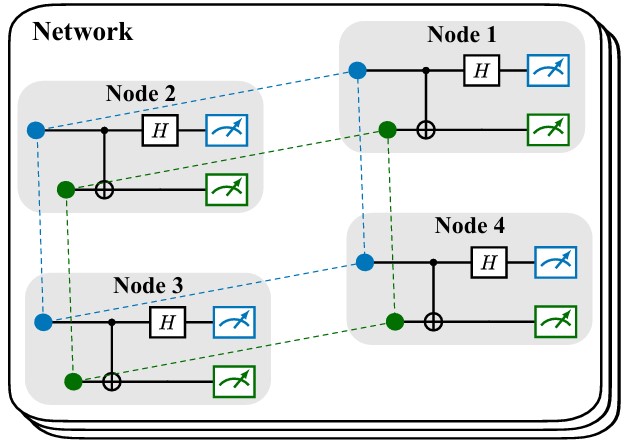

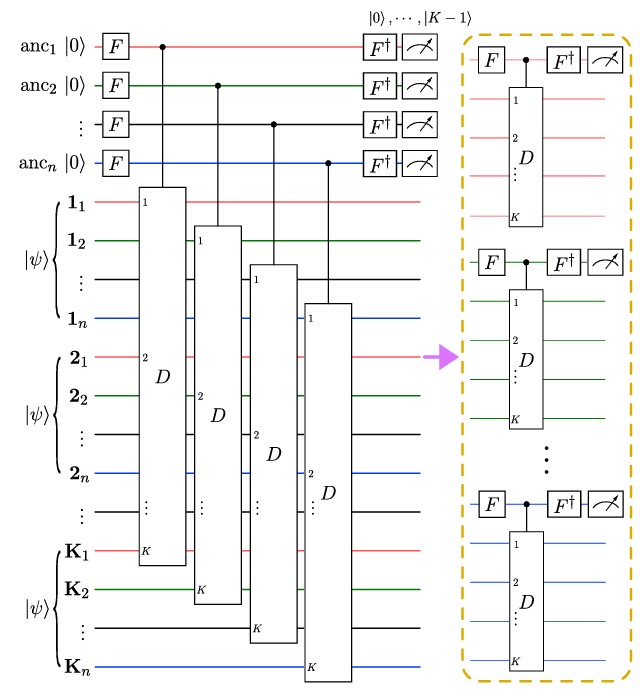

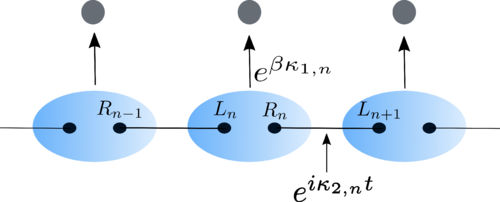

Quantum algorithms tailored to distributed architectures. We proposed hybrid methods to prepare low-energy eigenstates efficiently in networked or modular quantum devices. Notably, we developed techniques that leverage a coherent link to improve eigenstate preparation4 , and introduced a distributed protocol for preparing low-variance states5 . These developments are inspired in part by our contributions to the SuperQuLAN project. We have also reviewed the broader landscape of distributed quantum information processing6 (see also the dedicated distributed quantum computing section below).

Quantum differential equation solvers. We extended variational techniques to solve both ordinary and stochastic differential equations. This includes an analysis of VQAs inspired by Runge–Kutta methods7 , and a continuous-variable quantum algorithm for solving ODEs8 .

Quantum algorithms for near-term devices. We contributed to algorithm design for NISQ-era platforms, focusing on reducing sampling costs and enhancing parameter tuning. This includes optimal parameter shift rules9 and SDP-based noise mitigation for overlapping tomography10 . A broader perspective on these challenges and opportunities was laid out in our early programmatic work11 .
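For readers unfamiliar with parameter-shift rules, the sketch below applies the standard two-term rule to a single-qubit rotation. It is not the optimized rule of the cited work. The circuit (RX(θ) applied to |0⟩, followed by a Z measurement) and all function names are assumptions chosen for illustration.

```python
import math


def expectation_z(theta: float) -> float:
    """Return <Z> after applying RX(theta) to |0>.

    RX(theta)|0> = cos(theta/2)|0> - i sin(theta/2)|1>, so <Z> = cos(theta).
    """
    amp0 = complex(math.cos(theta / 2), 0.0)
    amp1 = complex(0.0, -math.sin(theta / 2))
    return abs(amp0) ** 2 - abs(amp1) ** 2


def parameter_shift_gradient(f, theta: float, shift: float = math.pi / 2) -> float:
    """Two-term parameter-shift estimate of df/dtheta.

    Exact (not a finite-difference approximation) when the gate generator
    has eigenvalues +1/2 and -1/2, as is the case for RX.
    """
    return (f(theta + shift) - f(theta - shift)) / (2 * math.sin(shift))


if __name__ == "__main__":
    theta = 0.3
    print(parameter_shift_gradient(expectation_z, theta), -math.sin(theta))
```

The gradient is obtained from two evaluations of the same circuit at shifted parameters, which is why the number and placement of shifts directly determine the sampling cost on hardware.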

Shallow circuits, divide & chop. We investigated shallow quantum circuits as approximators for complex quantum processes12 , studied multi-basis representations13 , and analyzed circuit-cutting bounds14 .

Distributed Quantum Computing

A natural path toward useful quantum computation is to network several modular devices rather than scale a single monolith. Our work in this direction spans algorithms, protocols, and surveys, often informed by our involvement in the SuperQuLAN initiative.

A panoramic survey. Our recent review traces the state of the art of distributed quantum information processing, from quantum-network primitives to distributed algorithms and the experimental platforms most likely to host them17 .

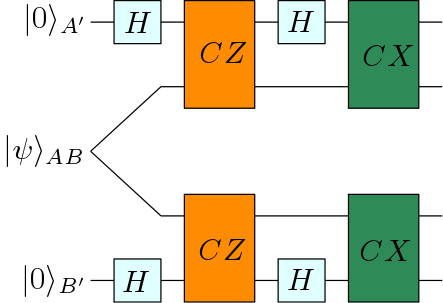

Characterizing distributed states by Bell sampling. We proposed efficient protocols to characterize graph-diagonal noisy states shared across the nodes of a quantum network using Bell-basis measurements18 , enabling scalable benchmarking of network states without global tomography.

Efficient entanglement detection via symmetries and permutation tests. We introduced an efficient measurement protocol for multipartite entanglement based on testing the action of permutations on copies of the state19 , and generalized the concept of concentratable entanglement via parallelized permutation tests20 . These methods provide scalable benchmarks of multipartite correlations in regimes where full-state tomography is out of reach.
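The simplest permutation test is the swap test on two copies of a state ρ, which accepts with probability (1 + Tr ρ²)/2. Applied to a subsystem of a pure state, an acceptance probability below 1 signals entanglement with the rest. The sketch below evaluates this probability for one qubit of a two-qubit pure state; it illustrates the basic idea only, not the protocols of the cited works, and the function names are hypothetical.

```python
import math


def reduced_purity(amplitudes) -> float:
    """Purity Tr(rho_A^2) of the first qubit of a two-qubit pure state.

    `amplitudes` lists the amplitudes in the order |00>, |01>, |10>, |11>.
    """
    amps = [complex(a) for a in amplitudes]
    # m[a][b] = <ab|psi>, so that rho_A = m m^dagger.
    m = [[amps[0], amps[1]], [amps[2], amps[3]]]
    rho = [
        [sum(m[i][k] * m[j][k].conjugate() for k in range(2)) for j in range(2)]
        for i in range(2)
    ]
    # For a Hermitian matrix, Tr(rho^2) equals the sum of |rho_ij|^2.
    return sum(abs(rho[i][j]) ** 2 for i in range(2) for j in range(2))


def swap_test_pass_probability(amplitudes) -> float:
    """Acceptance probability of a swap test on two copies of rho_A."""
    return (1.0 + reduced_purity(amplitudes)) / 2.0


if __name__ == "__main__":
    bell = [1 / math.sqrt(2), 0, 0, 1 / math.sqrt(2)]
    product_state = [1, 0, 0, 0]
    print(swap_test_pass_probability(bell))
    print(swap_test_pass_probability(product_state))
```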

Reinforcement learning for entanglement distribution. We used reinforcement learning to optimize entanglement distribution policies in quantum repeater networks under realistic communication delays21 , showing the advantage of interpretable and locally coordinated strategies over naive baselines.

Device-Independent Quantum Information Processing

We develop methods to verify quantum states, devices, and correlations in a device-independent fashion. This includes techniques for self-testing, randomness certification, and entanglement depth estimation, all relying only on observed statistics and minimal assumptions.
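To make "relying only on observed statistics" concrete, the sketch below evaluates the CHSH expression directly from a table of conditional probabilities p(a,b|x,y), with no reference to the underlying state or measurements. CHSH is used here as the simplest example rather than as one of the inequalities discussed in this section. The table produced by `ideal_statistics` is a hypothetical noiseless dataset (a maximally entangled two-qubit state with measurements in the X-Z plane), and all names are assumptions.

```python
import math


def correlator(p, x: int, y: int) -> float:
    """E_xy = sum over a, b of a*b*p(a,b|x,y), with outcomes a, b in {+1, -1}."""
    return sum(a * b * p[(a, b, x, y)] for a in (1, -1) for b in (1, -1))


def chsh_value(p) -> float:
    """CHSH combination E_00 + E_01 + E_10 - E_11 computed from statistics only."""
    return correlator(p, 0, 0) + correlator(p, 0, 1) + correlator(p, 1, 0) - correlator(p, 1, 1)


def ideal_statistics():
    """Hypothetical noiseless statistics with correlators cos(theta_x - phi_y)."""
    alice_angles = (0.0, math.pi / 2)
    bob_angles = (math.pi / 4, -math.pi / 4)
    p = {}
    for x, theta in enumerate(alice_angles):
        for y, phi in enumerate(bob_angles):
            e = math.cos(theta - phi)
            for a in (1, -1):
                for b in (1, -1):
                    p[(a, b, x, y)] = (1 + a * b * e) / 4
    return p


if __name__ == "__main__":
    # Any local hidden variable model satisfies |S| <= 2.
    print(chsh_value(ideal_statistics()))
```

A value above 2 cannot be reproduced by any local hidden variable model, and a value close to the quantum maximum 2√2 is the starting point of self-testing arguments.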

Self-testing protocols. We contributed to the development of self-testing techniques for certifying quantum states and operations based solely on observed outcomes. This includes the SATWAP inequality for maximally entangled states22 , robust self-testing of quantum assemblages23 , and approaches based on mutually unbiased bases24 and graph states25 . We also contributed to the generalization of SATWAP for GHZ states of arbitrary local dimension26 . More recently we tailored Bell inequalities to the qudit toric code, obtaining self-testing statements for topological code states27 .

Randomness certification. We study the certification of quantum randomness in device-independent and semi-device-independent settings, identifying the minimal quantum resources required for secure randomness generation. We showed that in multi-input/output Bell scenarios, Bell nonlocality is not always sufficient to certify randomness, establishing a separation between the two concepts31 . We also provided necessary and sufficient conditions for randomness certification based on measurement incompatibility structures, linking randomness generation to hypergraph properties32 . Early ideas on the relation between randomness and nonlocality were already explored through monogamy constraints in non-signaling theories33 .

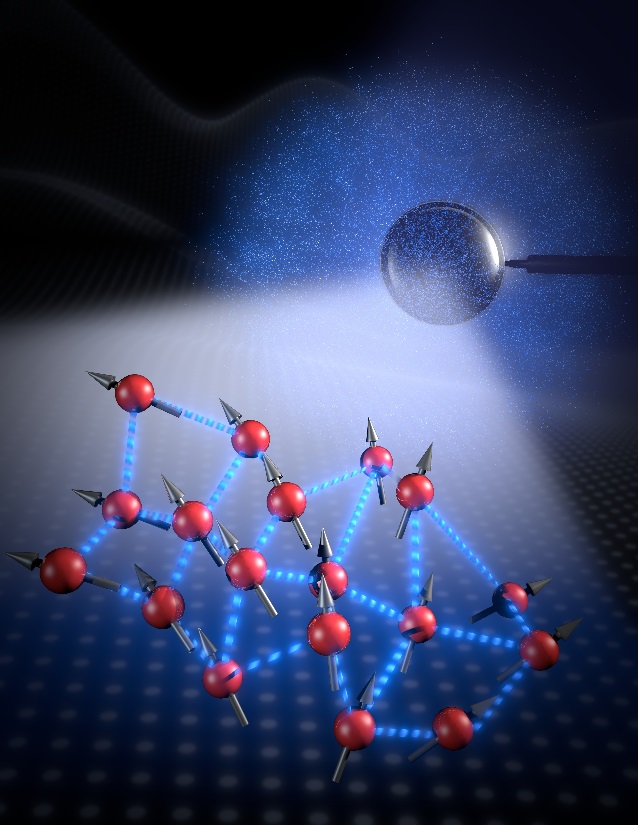

Bell Correlations in Quantum Many-Body Systems

This line of work began during my PhD36 and remains a cornerstone of my research. It seeks to characterize and detect Bell correlations in large quantum systems using accessible observables such as low-order correlators or the energy. These correlations defy explanation by local hidden variable models and underpin device-independent quantum information.

Few-body Bell inequalities and experimental detection. We introduced permutationally37 38 and translationally39 invariant Bell inequalities built from one- and two-body correlators, laying the foundation for scalable detection schemes. These methods enabled the first detection of Bell nonlocality in many-body spin systems, including a Bose-Einstein condensate of 480 atoms and a thermal ensemble of 5×10⁵ atoms. More recently, Bell correlations were observed in spin systems beyond the Gaussian regime40 .
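The reason such inequalities scale well can be sketched in a few lines. For a permutationally invariant expression with two dichotomic measurements per party, a local deterministic strategy is fully described by how many parties adopt each of the four outcome assignments, so the classical bound requires enumerating only polynomially many configurations in N. The code below is a minimal illustration under these assumptions; the example coefficients define the expression −2S₀ + ½S₀₀ − S₀₁ + ½S₁₁ and are chosen for illustration rather than taken from the text above.

```python
def classical_minimum(n_parties: int, coeffs) -> float:
    """Minimum of a two-body permutationally invariant Bell expression over
    local deterministic strategies.

    `coeffs` multiplies (S_0, S_1, S_00, S_01, S_11), where S_k is the sum of
    outcomes of measurement k over all parties and S_kl sums the products of
    outcomes over ordered pairs of distinct parties.
    """
    c0, c1, c00, c01, c11 = coeffs
    n = n_parties
    best = None
    # a, b, c, d count parties with predetermined outcomes (x_0, x_1) equal to
    # (+,+), (+,-), (-,+), (-,-) respectively.
    for a in range(n + 1):
        for b in range(n + 1 - a):
            for c in range(n + 1 - a - b):
                d = n - a - b - c
                s0 = a + b - c - d
                s1 = a - b + c - d
                same_party = a - b - c + d  # sum over parties of x_0 * x_1
                s00 = s0 * s0 - n
                s11 = s1 * s1 - n
                s01 = s0 * s1 - same_party
                value = c0 * s0 + c1 * s1 + c00 * s00 + c01 * s01 + c11 * s11
                if best is None or value < best:
                    best = value
    return best


# Illustrative coefficients (an assumption, see the lead-in).
EXAMPLE_COEFFS = (-2.0, 0.0, 0.5, -1.0, 0.5)

if __name__ == "__main__":
    for n in range(2, 7):
        print(n, classical_minimum(n, EXAMPLE_COEFFS))
```

For these coefficients the minimum equals −2N, so adding 2N to the expression gives a quantity that is non-negative for every local model; a negative measured value then witnesses Bell correlations.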

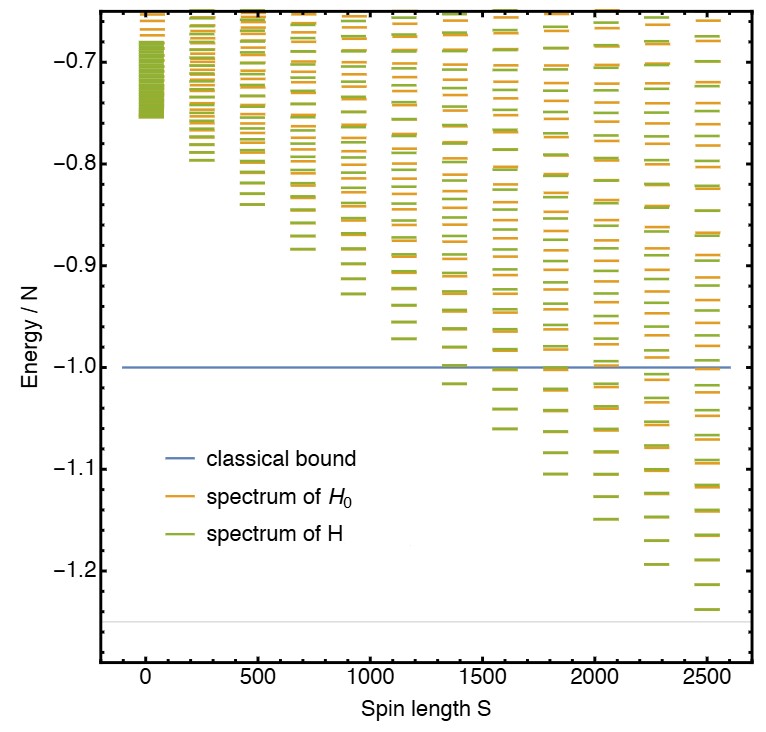

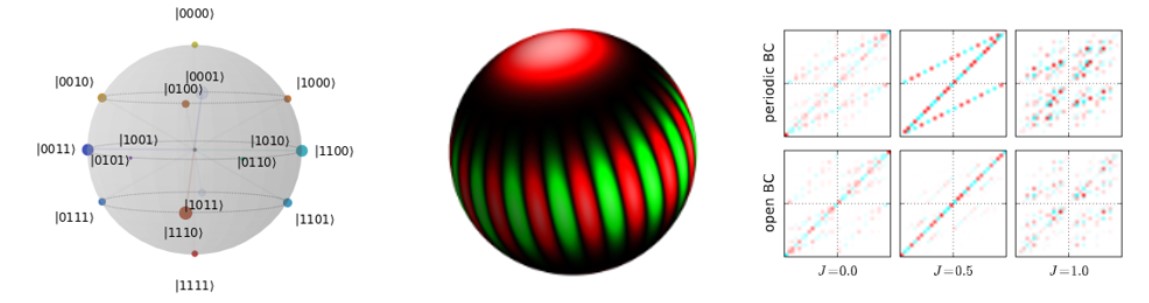

Energy-based Bell witnesses. We showed that the ground state energy of certain spin Hamiltonians can serve as a Bell witness, enabling detection of nonlocality through energy measurements41 . The technique leverages convex optimization and dynamic programming for tight classical bounds. More recently, we proposed new optimization tools based on tropical algebra and tensor network contractions42 43 , studied topological effects on Bell nonlocality44 , and developed strategies for operator bouncing between Bell operators and inequalities45 . We have also looked at stoquastic permutationally invariant Bell operators46 and at the optimization of quantum violations for multipartite facet Bell inequalities47 .

Semidefinite relaxations of local sets. We proposed a hierarchy of semidefinite programs to approximate the set of local correlations from the outside, enabling benchmarking of experimental data against few-body permutationally invariant Bell inequalities48 . These tools become indispensable when tackling richer scenarios, such as those with more outcomes or higher dimensions. We extended this framework in a tutorial on deriving three-outcome Bell inequalities49 and applied it to dimension witnessing and spin-nematic squeezing in many-body systems50 .

Temperature and quantum chaos. We showed that Bell correlations can persist at finite temperature in spin systems with infinite-range interactions51 , with spin-squeezed states as optimal witnesses. More recently, we proposed a Bell inequality for three-level systems and linked its violation to suppressed spectral signatures of quantum chaos52 , highlighting connections with random matrix theory. We dedicated this work to the memory of Fritz Haake.

Tensor Networks and Spectral Methods

Preparation and certification. We explored protocols for the adiabatic preparation and verification of tensor network states in quantum devices53 . Our approach provides efficient semidefinite programming tools to certify spectral gaps along the adiabatic path and introduces a complete set of verifiable observables for scalable fidelity benchmarking in many-body quantum simulations.

Adiabatic preparation and gap engineering. We developed a method to maximize the spectral gap in adiabatic protocols by optimizing over parent Hamiltonians, enabling faster preparation of tensor network states without altering their ground state54 . The approach applies to both injective and non-injective tensors and is demonstrated on paradigmatic examples like the AKLT and GHZ states.

Spectral gap certification. We introduced a hierarchy of semidefinite programs that yield rigorous lower bounds on the spectral gap of frustration-free Hamiltonians in the thermodynamic limit55 . Our method generalizes and improves upon existing finite-size bounds, offering tighter certificates and extending the regime where a gap can be provably detected.

Quantum Machine Learning

Training under realistic noise. We studied how machine-learning surrogates can be trained from data produced by noisy quantum algorithms, in particular learning density functionals for the Fermi-Hubbard model from samples drawn at NISQ-typical noise levels58 . Neural-network models proved able to generalize from surprisingly small noisy datasets, partially mitigating the heavy sampling overhead that limits quantum-data acquisition in current hardware.

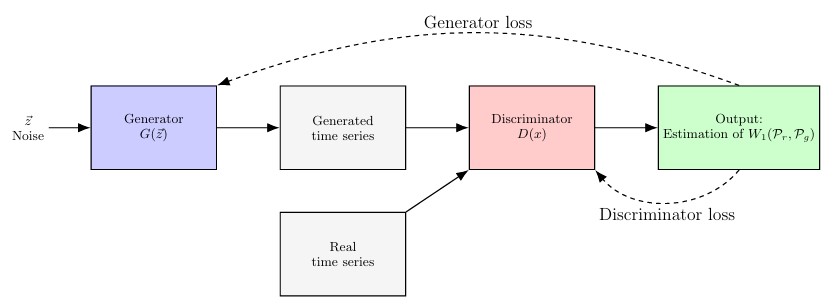

Continuous-variable and generative learning. We developed nonparametric methods to learn non-Gaussian quantum states of continuous-variable systems from measurement data59 . On the application side, we used parameterized quantum circuits as quantum generative models for financial time series with temporal correlations60 , illustrating how PQCs can capture non-trivial classical distributions of practical interest.

Certifying Many-Body Quantum Properties

Quantum marginal problem and variational constraints. We investigated the quantum marginal problem in symmetric systems and its implications for variational optimization, self-testing, and nonlocality61 . The results offer structural insights into feasible marginals and global state compatibility.

Machine learning for certification. We explored how machine learning tools can assist in certifying properties of complex many-body systems, offering data-driven certificates of entanglement or local constraints62 .

Quantum Metrology

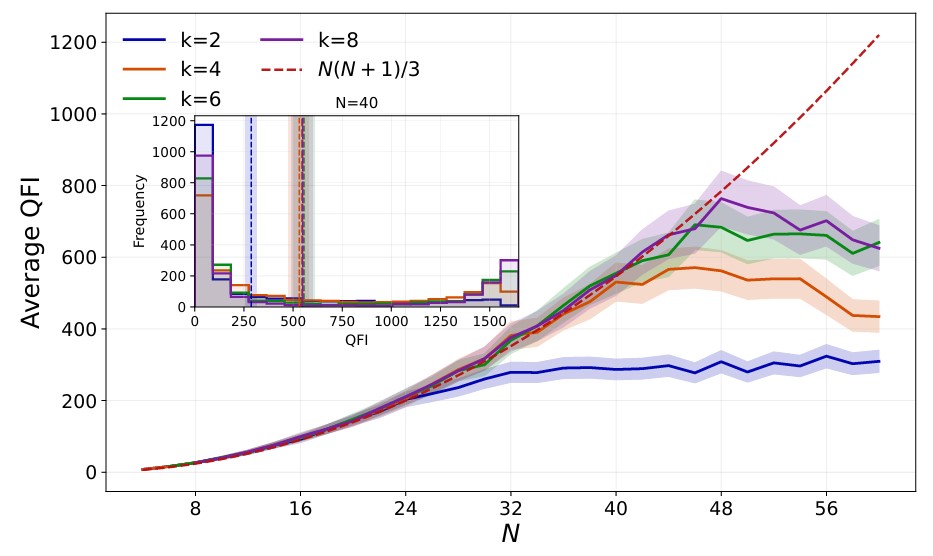

Many-body ground states as metrological probes. We have begun connecting our many-body and certification toolset to quantum metrology, showing that k-local ground states of suitably engineered Hamiltonians can serve as resources for unitary quantum metrology63 . This line complements our work on many-body Bell correlations, where Bell witnesses derived from spin-squeezed states are themselves metrological figures of merit.
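The usual figure of merit in unitary metrology is the quantum Fisher information (QFI), which for a pure probe state and an encoding exp(−iθH) equals four times the variance of H. The sketch below evaluates it for a collective generator J_z = ½ Σ Z_i on two textbook probes. The generator, the probe states, and the function names are assumptions for illustration and are not the k-local ground states of the cited work.

```python
from itertools import product


def qfi_collective_z(probabilities, n_qubits: int) -> float:
    """QFI = 4 Var(J_z) of a pure state under the encoding exp(-i theta J_z).

    `probabilities` maps bitstrings to squared amplitudes. J_z is diagonal in
    the computational basis, so the populations alone fix its variance.
    """
    mean = 0.0
    mean_sq = 0.0
    for bits, prob in probabilities.items():
        m = (n_qubits - 2 * bits.count("1")) / 2  # J_z eigenvalue of this bitstring
        mean += prob * m
        mean_sq += prob * m * m
    return 4 * (mean_sq - mean ** 2)


def ghz_populations(n_qubits: int):
    """Populations of (|0...0> + |1...1>)/sqrt(2)."""
    return {"0" * n_qubits: 0.5, "1" * n_qubits: 0.5}


def plus_product_populations(n_qubits: int):
    """Populations of the product state |+>^n (uniform over all bitstrings)."""
    weight = 1 / 2 ** n_qubits
    return {"".join(bits): weight for bits in product("01", repeat=n_qubits)}


if __name__ == "__main__":
    n = 4
    print(qfi_collective_z(ghz_populations(n), n))
    print(qfi_collective_z(plus_product_populations(n), n))
```

The entangled probe reaches the Heisenberg scaling N², while the product probe is limited to the shot-noise scaling N.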

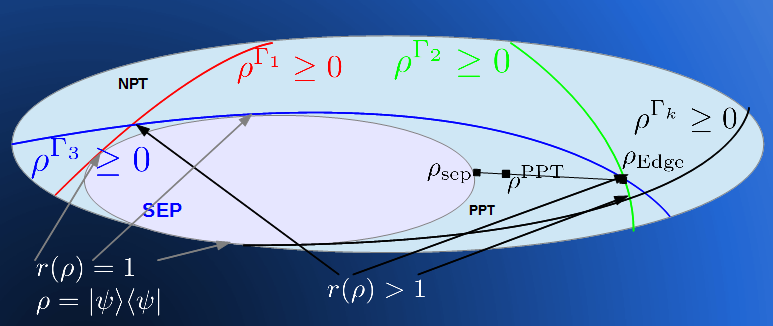

Entanglement Theory

A panoramic review on symmetric quantum states. Several lines of work below converge in our recent invited review for Reports on Progress in Physics64 , which surveys the mathematical structure and physical properties of permutationally invariant quantum states and the techniques used to characterize their entanglement, nonlocality, and tomographic structure.

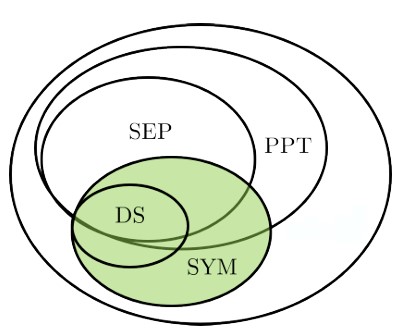

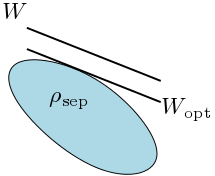

Diagonal symmetric states and conic geometry. We explored the entanglement structure of diagonal symmetric states, showing that testing separability in this class is NP-hard and naturally framed as a quadratic conic optimization problem67 . Later, we established a link between these states and copositive matrices, connecting entanglement theory to non-decomposable witnesses and conic duality68 .

Optimality of entanglement witnesses. Our early research explored decomposable entanglement witnesses and their relation to completely entangled subspaces and unextendible product bases69 . We later applied these tools to test optimality and contributed to the disproof of the Structural Physical Approximation conjecture70 .

Entanglement vs Nonlocality. We demonstrated that entanglement and nonlocality are fundamentally inequivalent for any number of parties, highlighting the subtle distinction between these two key concepts71 .

Structural tools for multipartite entanglement. We developed linear map techniques to certify entanglement and compatibility properties in many-body systems72 , and introduced the concept of unextendible product operator bases as a generalization of UPBs to operator spaces73 . We also used analytic combinatorics to characterize sector length distributions of recursively definable graph states74 , linking entanglement structure to underlying graph topology.

Quantum Thermodynamics

Foundations of quantum thermodynamics. We explored the fundamental limits of energy extraction from quantum systems, including the role of entanglement in defining passive states and their maximal energetic properties75 . This work reveals structural constraints on thermodynamic tasks at the quantum level.

Thermalization and open dynamics. We investigated the onset of thermalization in quantum many-body systems through the lens of Lindbladian dynamics and graph-theoretic techniques. Our results provide constructive bounds on convergence and thermal behavior in both classical and quantum regimes76 .

Outreach and Broader Contributions

The BIG Bell Test. I actively contributed to The BIG Bell Test, a global experiment involving more than 100,000 participants to close the freedom-of-choice loophole in Bell tests by using human randomness77 . We designed both outreach material and infrastructure to integrate human choices into quantum experiments worldwide.

Science writing in IMTech. I contributed a pedagogical article on Bell correlations in quantum many-body systems to the IMTech Newsletter. This piece aims to make recent developments in nonlocality and symmetry accessible to a broad STEM audience, including undergraduate students and educators.

Visualizing multi-qubit states. We proposed the Bloch Sphere Binary Tree representation to visualize sets of multi-qubit pure states on a single diagram, making it easier to analyze their evolution and entanglement structure78 . The accompanying open-source Python library enables interactive use in quantum education and research.

News & Views: Insights into Quantum Computing.

Our News & Views article in Nature Physics critically examines recent experimental demonstrations claiming quantum advantage. We highlight a tensor network algorithm that challenges these claims by efficiently simulating optical quantum experiments on classical hardware79 , based on the original study.

Our Viewpoint in Physics discusses how realistic imperfections can simplify the classical simulation of quantum computers, potentially reducing the resources required80 , based on the original research.

Our recent contribution to Nature Computational Science discusses advanced parallelization techniques for tensor network contraction, which speed up classical simulations of quantum systems81 , based on the original publication.

A Christmas story about quantum teleportation. We contributed a playful piece connecting quantum teleportation to the logistics of gift delivery, using Santa Claus as a vehicle to explain key ideas in quantum communication and entanglement82 .